The full Airflow DAG itself I won't post, but in the excerpt below I show how to use the filename in the DAG. The nice thing here is that I'm actually passing the filename of the new file to Airflow, which I can use in the DAG lateron. You need to adjust the AIRFLOW_URL, DAG_NAME, AIRFLOW_USER, and AIRFLOW_PASSWORD.

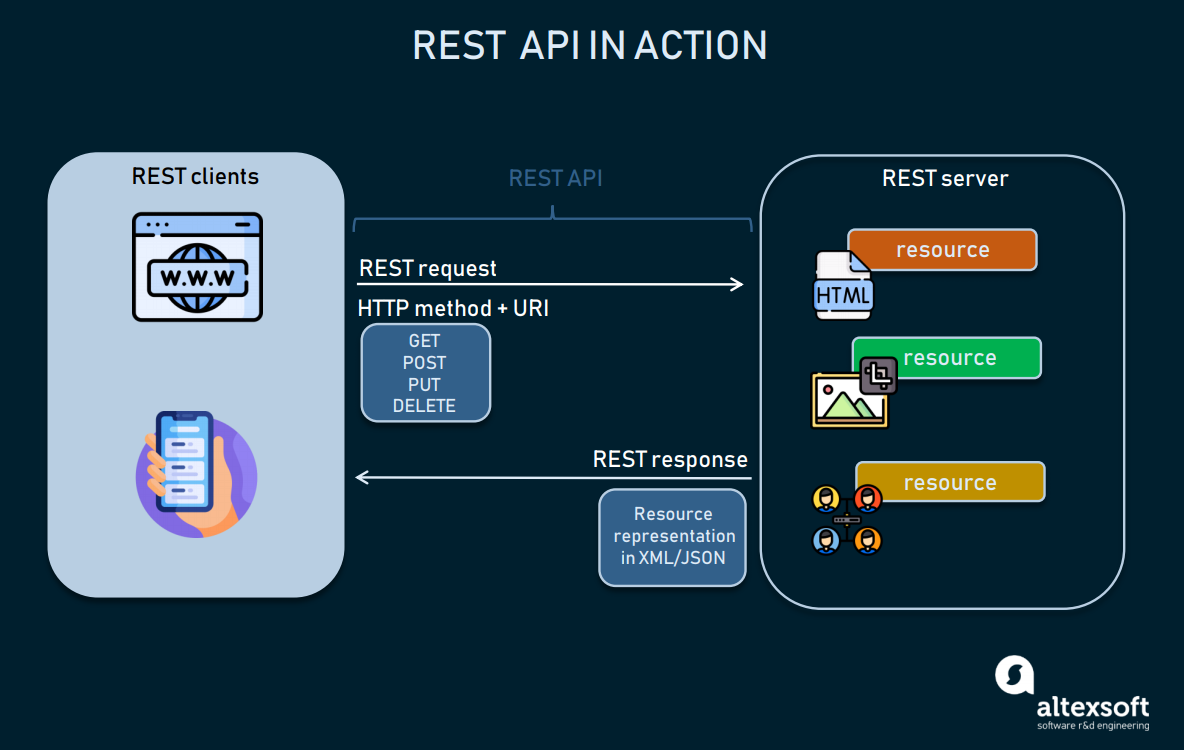

Stay tuned to the following article in which we’ll discuss Airflow and Amazon S3 connection.Var request = require ( 'request' ) module. Examples include a specific file landing in HDFS or S3, a partition appearing in Hive, or a specific time of the day. The sensor definition follows as taken from the documentation: Sensors are a certain type of operator that will keep running until a certain criterion is met. You know how to do it by now, and it will come as a good practice. There is no such thing as a callback or webhook sensor in Airflow. I’ll leave task dependencies and running the task through the Airflow webserver up to you. The best practice is to check if the API is available first, so you don’t think there’s something wrong with the parsing logic if it isn’t. Today you’ve learned how to communicate with REST APIs in Apache Airflow. Let’s make a brief summary before wrapping things up. Image 6 - Saved posts in JSON format (image by author)Īll of the fetched posts were saved to the JSON file, which means all of our tasks work as advertised. It looks like everything went well, which means you should see a posts.json file in the data folder. Image 5 - Testing the task for saving posts (image by author) Use the following code to declare an HttpSensor - it checks if an API declared earlier in the Airflow configuration is running for the given endpoint - posts/: Add the token as a Bearer token in the Authorization header. I have tried having one task to make a POST API call and Another task to keep on calling the. And Need to make GET API calls to check the status of the execution and have to make that call until the execution gets completed. Not checking if API is available could result in you searching for bugs in the wrong places. This token you can be used when you make API requests to airflow. Im quite new to Airflow, I need to make asynchronous POST API calls to start the execution of the external service. Why? The reason is simple - by dividing the logic into two tasks (one checks if the API is available and the other fetches the data) you can know if the DAG failed because API wasn’t up or because there was an error in your code. Sometimes the website you’re connecting to is down, and you should always check for it. Write the Airflow DAGĪ good practice when working with external APIs is to check if they are available first. You now have everything needed to extract the data, so let’s do that next. Feel free to change the connection ID and description, but leave the connection type and host as shown on the image.

The DAG will extract posts JSON data from .in website, which serves as a dummy REST API for testing purposes. Whether you are a beginner or an experienced Airflow user, this step-by-step guide will provide you with the knowledge and tools to seamlessly integrate REST APIs into your workflows in an Airflow. For such use case you better to use Git sync to add files to the DAG directory. Image 1 - Define the HTTP connection in Airflow (image by author) A new option in airflow is the experimental, but built-in, API endpoint in the more recent builds of 1.7 and 1.8.This allows you to run a REST service on your airflow server to listen to a port and accept cli jobs. In simple words by adding such API it means that the machine(s) where DAGs are deployed to need to have credentials to write those DAG files to all the other components. Click on the plus sign to add a new connection, and specify the connection parameters as shown on the image below: This endpoint allows you to interact with your MWAA environment using the Airflow CLI, which can be useful for tasks like triggering DAGs, checking the status. The next step is to open the Airflow homepage and go under Admin - Connections. Schedule_interval =datetime( 2022, 3, 1), Configuring Apache Airflow to Call REST APIs. From .http import HttpSensorįrom .http import SimpleHttpOperatorįrom import PythonOperator

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed